Risk assessment frameworks for AI features

Effective risk assessment helps teams identify potential AI harms before they affect users. Start with a cross-functional brainstorming session that lists specific risks for each user group. For example, a content recommendation system might reinforce harmful stereotypes.

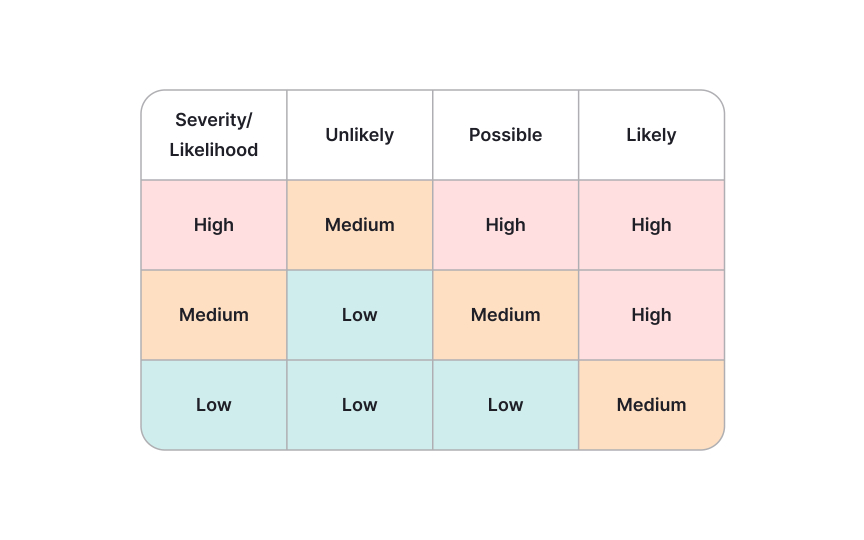

Create a simple 3×3 matrix that rates each risk on severity (low/medium/high) and likelihood (low/medium/high). This focuses attention on the high-severity, high-likelihood issues that need immediate action. For an e-commerce recommendation AI: "Recommending out-of-stock products" might be high-likelihood/medium-severity, "Showing inappropriate products to minors" could be low-likelihood/high-severity, and "Consistently recommending more expensive alternatives" might be medium-likelihood/medium-severity.
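The matrix can also be kept as a small script so the ranking is reproducible. The following is a minimal sketch in Python, not a standard method: the `Risk` class, the `prioritize` helper, and the multiplicative score (likelihood times severity, each mapped to 1 to 3) are assumptions made for illustration. The three entries are the e-commerce examples above.

```python
from dataclasses import dataclass

# Assumed numeric mapping for the three levels on each axis of the 3x3 matrix.
LEVELS = {"low": 1, "medium": 2, "high": 3}


@dataclass(frozen=True)
class Risk:
    """One cell entry in the risk matrix (hypothetical structure)."""
    description: str
    likelihood: str  # "low", "medium", or "high"
    severity: str    # "low", "medium", or "high"

    @property
    def score(self) -> int:
        # Assumed scoring rule: likelihood x severity, ranging from 1 to 9.
        return LEVELS[self.likelihood] * LEVELS[self.severity]


def prioritize(risks):
    """Sort risks from highest to lowest score, breaking ties by severity."""
    return sorted(
        risks,
        key=lambda r: (r.score, LEVELS[r.severity]),
        reverse=True,
    )


risks = [
    Risk("Recommending out-of-stock products", likelihood="high", severity="medium"),
    Risk("Showing inappropriate products to minors", likelihood="low", severity="high"),
    Risk("Consistently recommending more expensive alternatives",
         likelihood="medium", severity="medium"),
]

for risk in prioritize(risks):
    print(f"{risk.score}  {risk.likelihood}/{risk.severity}  {risk.description}")
```

Under this scoring rule the low-likelihood/high-severity risk ranks last, which may understate it. A team could reasonably decide to escalate any high-severity risk regardless of its score.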

Develop specific countermeasures for priority risks. Technical safeguards might include content filters or confidence thresholds. Policy measures could involve human review requirements. For instance, if your chatbot risks generating inappropriate responses, implement both filtering technology and clear user reporting paths. Test your protections through realistic scenarios that intentionally try to trigger identified risks. This reveals gaps in your mitigation strategies before users encounter them.
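As a rough illustration of combining a content filter, a confidence threshold, and scenario testing, here is a minimal Python sketch. Everything in it is hypothetical: the `safeguard` function, the placeholder `BLOCKED_TERMS`, the 0.7 cutoff, and the scenario list are assumptions, and a real system would likely use a dedicated moderation model rather than substring matching.

```python
# Placeholder terms; a real filter would use a maintained list or a classifier.
BLOCKED_TERMS = {"example-slur", "example-threat"}

# Assumed cutoff below which the chatbot's answer is withheld.
CONFIDENCE_THRESHOLD = 0.7

FALLBACK = "Sorry, I can't help with that. Please use the report link if this seems wrong."


def safeguard(response: str, confidence: float) -> str:
    """Return the response unchanged only if it clears both checks."""
    lowered = response.lower()
    if any(term in lowered for term in BLOCKED_TERMS):
        return FALLBACK  # content filter triggered
    if confidence < CONFIDENCE_THRESHOLD:
        return FALLBACK  # model not confident enough
    return response


# Scenarios that deliberately try to trigger the identified risks:
# (candidate response, model confidence, should the response reach the user?)
SCENARIOS = [
    ("Here is your order status.", 0.95, True),
    ("This contains EXAMPLE-SLUR text.", 0.99, False),
    ("Maybe the answer is 42?", 0.30, False),
]


def run_scenarios():
    """Return the responses where the safeguard behaved differently than expected."""
    failures = []
    for response, confidence, should_pass in SCENARIOS:
        passed_through = safeguard(response, confidence) == response
        if passed_through != should_pass:
            failures.append(response)
    return failures


print(run_scenarios())
```

An empty list means every scenario behaved as expected. Each new risk added to the matrix would get at least one scenario here, so gaps show up in testing before users encounter them.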